What Are High Chromium Castings and How Do You Maximize Their Wear Life?

What Is High Chromium Cast Iron and Why Does It Resist Wear?

High chromium cast iron is a ferrous alloy containing 11 to 30 percent chromium and 2.0 to 3.5 percent carbon, with the chromium and carbon combining during solidification to form chromium carbides of the M7C3 type. These carbides have a Vickers hardness of 1,400 to 1,800 HV, making them among the hardest phases found in any engineering material short of tool grade ceramics. The surrounding metallic matrix, typically martensitic after appropriate heat treatment, provides toughness that prevents the brittle fracture that would destroy a ceramic material under the same impact conditions.

The bulk hardness of a heat treated high chromium white iron casting is typically 58 to 66 HRC (Rockwell C scale), compared to 35 to 45 HRC for hardened medium carbon steel and 180 to 220 HB for standard gray iron used in general engineering castings. This hardness advantage translates directly into abrasive wear resistance: in the Miller number abrasion test and ASTM G65 dry sand rubber wheel test, high chromium white irons consistently show 3 to 10 times lower volume loss than standard gray iron and 2 to 5 times lower volume loss than hardened steel in the same test conditions.

The Role of Chromium Content in Wear Performance

The chromium content of the alloy determines the type, volume fraction, and distribution of the carbides that form during solidification, and it also determines the corrosion resistance of the metallic matrix. In alloys with 11 to 14 percent chromium, the carbide volume fraction is relatively low (15 to 20 percent) and the matrix is more susceptible to corrosion in acidic slurry environments. As chromium content increases toward 25 to 30 percent, the carbide volume fraction increases to 25 to 35 percent, and the chromium content of the matrix increases to a level that provides meaningful corrosion resistance in moderately aggressive environments.

The 25 to 28 percent chromium grades, often designated as Cr26 or conforming to the ASTM A532 Class III Type A specification, are the most widely used for severe combined abrasion and corrosion service in mining slurry applications. The 15 to 18 percent chromium grades (Cr15, ASTM A532 Class II Type B) offer a good balance of hardness, toughness, and cost for dry abrasion service in crushers and mills. Selecting the appropriate chromium grade for the specific application is the first engineering decision in specifying high chromium castings, and it has a larger effect on service life than any subsequent heat treatment or operational parameter.

Alloying Additions That Modify Performance

Beyond chromium and carbon, high chromium cast iron compositions are modified by several additional alloying elements that refine the microstructure, improve hardenability, or enhance specific properties:

- Molybdenum (0.5 to 3.0 percent): Enhances hardenability, ensuring that the martensitic transformation is complete throughout thick sections during air or oil quench heat treatment. Without molybdenum, thick cross section castings may retain austenite in the core, reducing hardness in the regions that experience the deepest wear over time as the surface wears away. Molybdenum is a standard addition in castings for applications requiring consistent hardness throughout sections exceeding 50 millimeters.

- Manganese (0.5 to 1.5 percent): Increases austenite stability, which can be useful for controlling the austenite level in the as cast condition before heat treatment. Excessive manganese, however, over stabilizes the austenite and suppresses martensite formation during heat treatment, so it should be kept within the specified range for the target grade.

- Nickel (0.5 to 1.5 percent): Used in combination with molybdenum to enhance hardenability in grades where molybdenum alone is insufficient for very thick sections or where molybdenum cost is being controlled. Nickel has a lesser effect on hardenability per unit addition than molybdenum but is less expensive, making the combination economically optimum for many commercial casting alloys.

- Copper (0.5 to 1.2 percent): Promotes pearlite suppression and improves matrix hardness in some lower chromium grades. Copper additions are more common in Cr15 alloys where the hardenability from chromium alone may be borderline for air hardening of medium section thicknesses.

Advantages of High Chromium Cast Iron Over Standard Castings

The performance advantages of high chromium cast iron over the standard gray iron, ductile iron, and carbon steel castings used in general engineering applications are most clearly demonstrated by comparing specific wear rate data from service trials and standardized laboratory tests in the same application conditions. The following comparison addresses the key advantage categories that drive the specification of high chromium castings in industrial wear applications.

Abrasive Wear Life Comparison

In high stress abrasion service with coarse, hard abrasive particles (granite, quartzite, iron ore, and similar hard rock abrasives with Mohs hardness above 6), high chromium white iron castings routinely achieve 3 to 8 times the service life of equivalent components made from standard gray iron. Against hardened medium carbon steel (350 to 400 HB), the advantage is typically 2 to 4 times, depending on the abrasive particle hardness and the stress conditions. In low stress abrasion with fine, soft abrasive particles, the wear life advantage is more modest, in the range of 1.5 to 2.5 times, because the finer particles are less effective at penetrating the hard carbide surface and the advantage of the carbide microstructure over a hard martensite matrix is smaller.

In a published service trial in a limestone crushing application, Cr26 high chromium iron blow bars in a horizontal shaft impact crusher achieved 850 metric tonnes of limestone per kilogram of blow bar wear, compared to 210 metric tonnes per kilogram for hardened steel blow bars of equivalent geometry in the same crusher processing the same feed. This represents a 4 fold wear life advantage that, after accounting for the higher unit cost of the high chromium castings, produced a 60 percent reduction in cost per tonne of crushed product from the blow bar wear budget alone.
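
The arithmetic behind these figures can be checked with a short sketch. The trial reports tonnes crushed per kilogram of wear and the 60 percent saving, but not the price of either material, so the price ratio computed here is back calculated from those two figures and is an inference, not reported data. The function and variable names are illustrative.

```python
def wear_life_ratio(tonnes_per_kg_a: float, tonnes_per_kg_b: float) -> float:
    """Ratio of tonnes crushed per kilogram of wear, material A over material B."""
    return tonnes_per_kg_a / tonnes_per_kg_b


def implied_price_ratio(life_ratio: float, cost_reduction: float) -> float:
    """Price per kilogram of A relative to B that would yield the stated saving.

    Wear cost per tonne is (price per kg) / (tonnes per kg), so
    cost_a / cost_b = price_ratio / life_ratio = 1 - cost_reduction.
    """
    return (1.0 - cost_reduction) * life_ratio


# Figures quoted in the limestone trial above.
ratio = wear_life_ratio(850.0, 210.0)      # Cr26 iron versus hardened steel
price = implied_price_ratio(ratio, 0.60)   # inferred, not stated in the trial

print(f"wear life ratio: {ratio:.2f}")
print(f"implied price ratio per kg: {price:.2f}")
```

Under these assumptions the wear life ratio comes out near 4, and the quoted 60 percent saving is consistent with a high chromium blow bar priced at roughly 1.6 times the steel bar per kilogram of wear metal.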

Corrosion Abrasion Combined Resistance

In wet processing applications where abrasive slurry contacts the wearing surface, the synergistic effect of simultaneous abrasion and corrosion accelerates wear at a rate greater than the sum of the two mechanisms acting independently. The passive chromium oxide layer that forms on the surface of high chromium cast iron (particularly the Cr26 grades with matrix chromium content exceeding 13 percent) provides meaningful corrosion protection that retards this synergistic acceleration, making the combined corrosion abrasion service life advantage of high chromium iron over unprotected carbon steel significantly greater than the dry abrasion advantage alone.

In acidic mineral slurry applications with pH values between 4 and 6, where corrosion is a significant wear mechanism, Cr26 high chromium iron pump impellers and liners have demonstrated service lives 5 to 10 times longer than carbon steel equivalents, compared to the 2 to 4 times advantage seen in dry abrasion applications with similar particle hardness and impact conditions.

Wear Comparison Table: Material Grades in Abrasive Service

| Material | Typical Hardness | Relative Wear Life (High Stress Abrasion) | Best Application Conditions |
|---|---|---|---|
| Gray iron (Grade 250) | 180 to 220 HB | 1.0 (reference) | Low abrasion, general engineering |
| Ductile iron (Grade 400) | 200 to 280 HB | 1.2 to 1.5 | Moderate impact, low abrasion |
| Hardened carbon steel (Mn Cr) | 350 to 420 HB | 2.0 to 3.0 | High impact, moderate abrasion |
| High Mn austenitic steel (Hadfield) | 200 HB (work hardens to 500 HB) | 2.5 to 4.0 | Very high impact, moderate abrasion |
| High Cr iron (Cr15, ASTM A532 Class II) | 58 to 63 HRC | 4.0 to 6.0 | High abrasion, moderate impact, dry service |
| High Cr iron (Cr26, ASTM A532 Class III) | 60 to 66 HRC | 5.0 to 8.0 | High abrasion, corrosive slurry, mining |

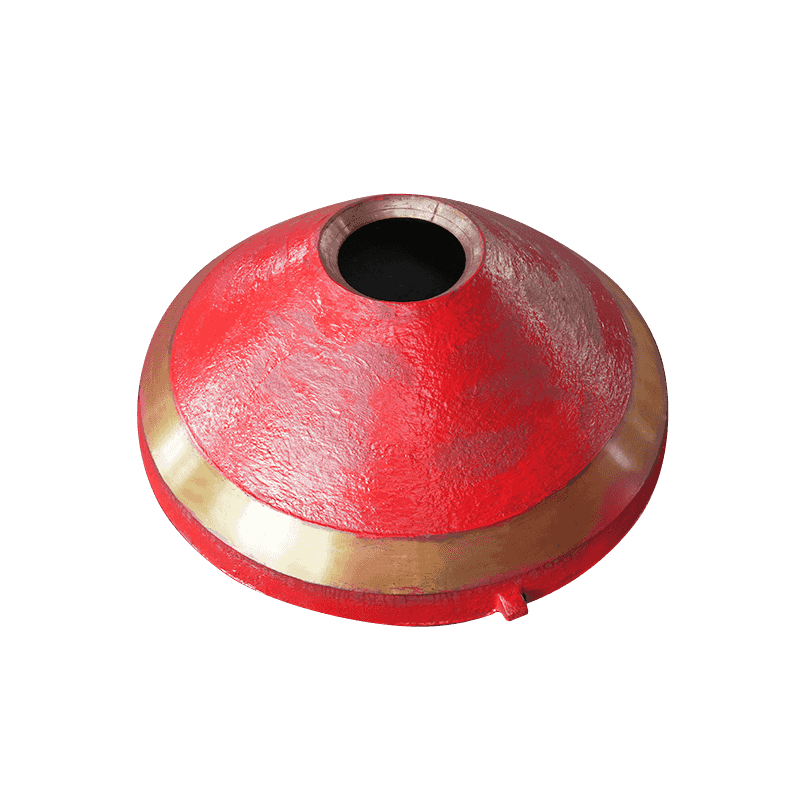

Crusher High Chromium Castings: Impact Crusher Applications

Impact crushers, including horizontal shaft impactors (HSI) and vertical shaft impactors (VSI), subject their wear components to a particularly demanding combination of high velocity impact and abrasive sliding. The primary wearing components in horizontal shaft impact crushers are the blow bars, the apron liners (also called impact plates or breaker plates), and the side liners. In vertical shaft impactors, the key wear components are the rotor shoes, anvils, and feed tube liners. High chromium cast iron is the standard material specification for all of these components in medium and hard rock crushing applications.

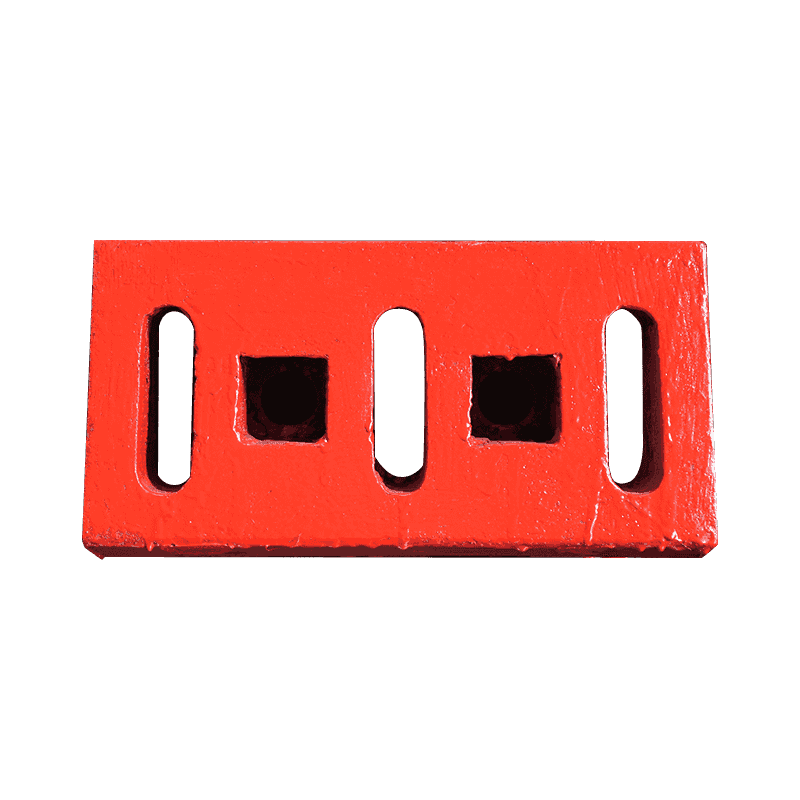

Blow Bars for Horizontal Shaft Impact Crushers

The blow bar is the primary crushing element in a horizontal shaft impactor, rotating with the rotor at tip speeds of 25 to 45 meters per second and repeatedly impacting feed rock at high velocity. The blow bar must resist both the high energy impact of the initial rock strike and the subsequent abrasive sliding of broken rock fragments along the bar's working face as material is accelerated through the crushing chamber. This combination of impact and abrasion requires a material that offers both adequate toughness to survive the impact loads without brittle fracture and high hardness to resist the abrasive sliding wear.

The optimal blow bar material for limestone, sandstone, and similar medium hardness feed materials is typically Cr26 or Cr20 high chromium iron with a heat treated hardness of 60 to 65 HRC, which provides the best combination of wear life and fracture resistance in this service. For harder, more abrasive feed materials such as granite, quartzite, and iron ore, the chromium content may be increased toward 28 to 30 percent, and additional molybdenum (1.5 to 2.5 percent) is used to ensure full martensite transformation throughout the blow bar section thickness of typically 80 to 150 millimeters.

For highly abrasive feed materials with silica content above 60 percent (such as quartzite and silica sand), composite blow bars with a high chromium iron insert cast into a ductile iron or steel backing body are used to combine the wear resistance of high chromium iron on the working face with the toughness of ductile iron or steel at the attachment points, where brittle fracture of a full high chromium iron section could cause catastrophic bar loss.

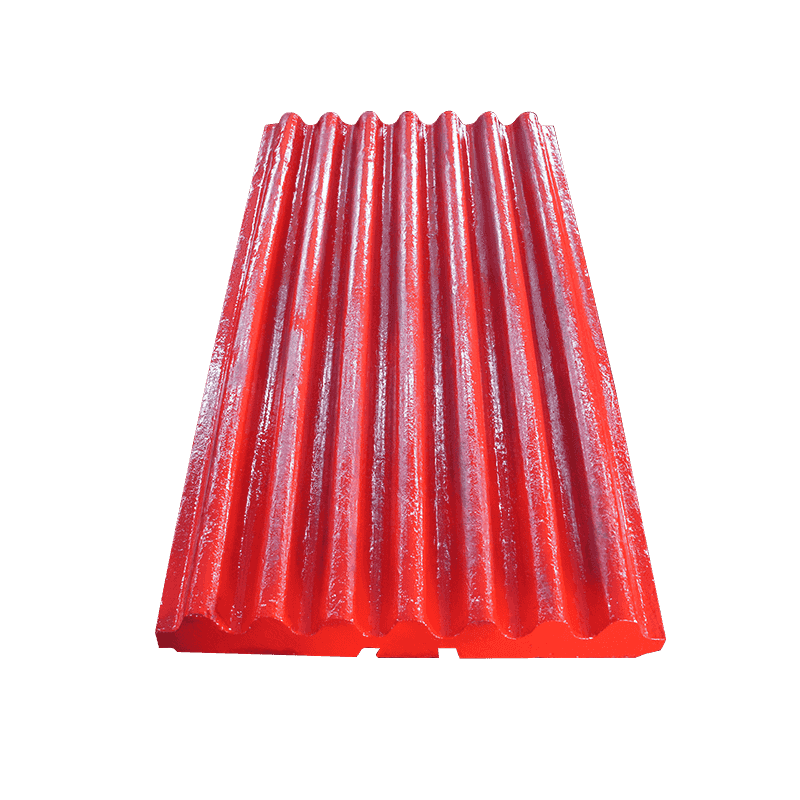

Apron Liners and Side Liners

The apron liners in a horizontal shaft impactor form the secondary impact surfaces that rock strikes after being thrown from the rotor. These liners experience lower velocity impacts than blow bars but still require high hardness to resist the abrasive wear from rock sliding along their surfaces between impacts. High chromium iron liners of the Cr15 or Cr20 grade are standard for limestone and medium hard rock applications; for harder rock, Cr26 grade may be selected. The side liners, which contain material within the crushing chamber and guide the crushed product toward the discharge opening, experience primarily abrasive sliding wear with less impact, and Cr15 grade is adequate for most side liner applications regardless of rock hardness.

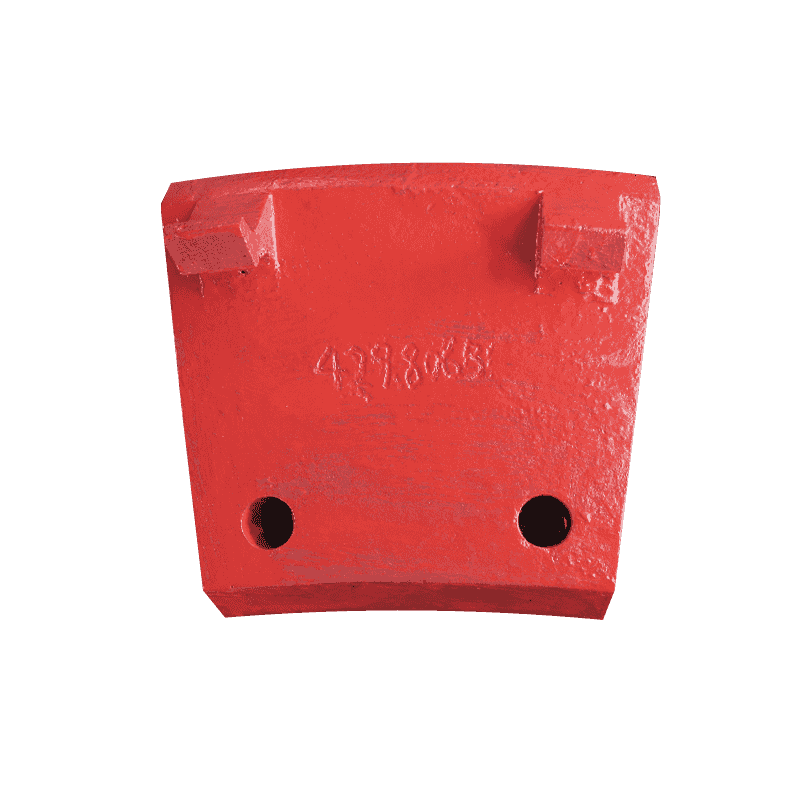

VSI Rotor Components

Vertical shaft impactors operate by accelerating feed material through a rotor to speeds of 45 to 75 meters per second before it impacts a surrounding ring of anvils or a rock shelf. The rotor shoes (the components that accelerate material through the rotor) and the anvils (the fixed impact targets) experience extremely aggressive combined impact and abrasion. VSI rotor shoes in hard rock applications are typically Cr26 or Cr28 grade with hardness of 63 to 66 HRC, and they are replaced at intervals of 100 to 400 hours depending on rock hardness and abrasivity index. The high replacement frequency of VSI wear parts makes the economics of material selection extremely sensitive to unit cost per hour of service, and the price performance ratio of different high chromium iron grades and competitor materials is evaluated on cost per tonne of processed product rather than unit price alone.

Vertical Grinding Mill High Chromium Castings

Vertical grinding mills (also called vertical roller mills or VRM) grind raw material, clinker, slag, and coal by pressing and rolling feed material between rotating grinding rollers and a stationary or rotating grinding table. The contact pressures between roller and table exceed 200 megapascals in modern high efficiency VRM designs. The combination of high normal stress, abrasive sliding at the roller to table contact zone, and the thermal effects of high speed grinding produces some of the most severe wear conditions encountered by any industrial casting.

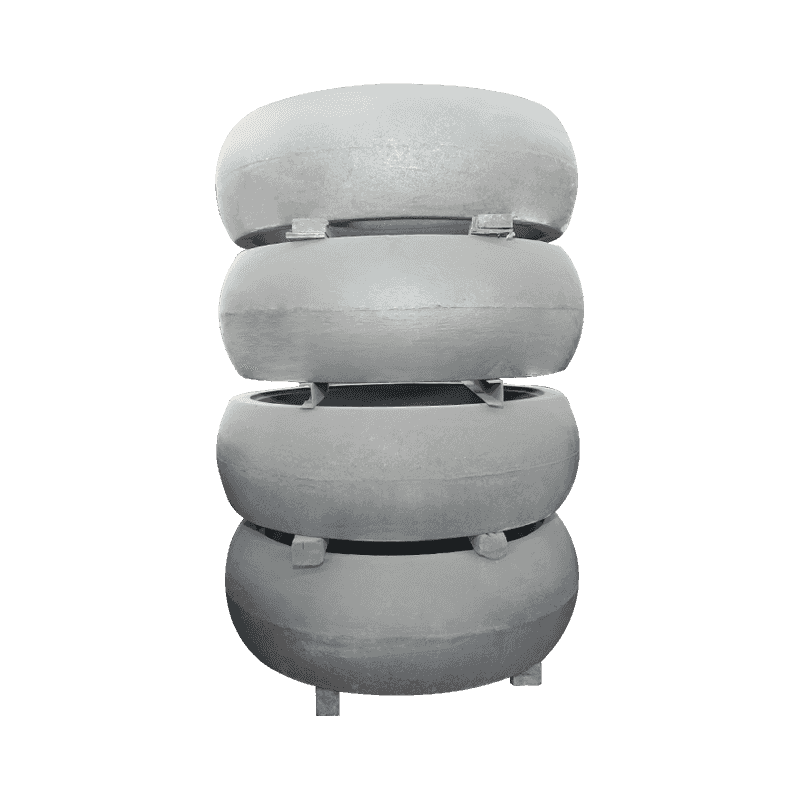

Grinding Roller Tires and Table Segments

The grinding roller tire (the replaceable outer shell of the grinding roller) and the grinding table segments (the wear resistant liner segments bolted to the grinding table) are the primary wearing components in a vertical grinding mill. Both components are typically cast from high chromium iron, with the specific grade selected based on the material being ground and the specific VRM design's operating parameters.

For cement raw material and clinker grinding, where moderate hardness feed (Mohs 3 to 5) is processed at high throughput rates, Cr15 to Cr20 grade high chromium iron is standard for both roller tires and table segments, delivering service lives of 8,000 to 15,000 operating hours before replacement is required. For slag grinding, where granulated blast furnace slag is significantly harder and more abrasive than cement clinker (Mohs hardness 6 to 7 for some slag types), Cr26 grade is preferred, and service lives of 6,000 to 10,000 hours are typical depending on slag characteristics.

The size of VRM roller tires and table segments creates significant casting challenges because sections of 100 to 250 millimeters thickness must achieve uniform hardness throughout to prevent the accelerated wear that occurs when a softer core is exposed as the initial hard surface layer wears away. This requires careful alloy design with adequate hardenability (achieved through molybdenum and nickel additions as described above) and controlled heat treatment procedures that achieve the required cooling rate throughout the entire section thickness.

Coal Mill Grinding Elements

Coal pulverizers used in power generation plants grind coal to a fine powder before injection into boiler furnaces. The grinding elements (bowl liners, roll shells, and table segments) in coal pulverizers operate in an environment of simultaneous abrasion from coal and mineral inclusions, thermal cycling from the hot air used to dry coal during grinding, and potential explosive ignition risk from coal dust accumulation. High chromium cast iron is the standard grinding element material for all major bowl mill and roller mill designs used in power generation, with Cr15 grade being most common and Cr26 grade used for highly abrasive coals with high mineral matter content (ash content above 20 percent).

VRM Application Summary by Ground Material

| Ground Material | Typical Mohs Hardness | Recommended Cr Grade | Typical Service Life (Hours) | Key Alloying Additions |
|---|---|---|---|---|
| Soft coal (low ash) | 1 to 2 | Cr15 | 12,000 to 18,000 | Mo 0.5 to 1.0% |
| Hard coal (high ash) | 3 to 5 | Cr20 to Cr26 | 6,000 to 12,000 | Mo 1.0 to 2.0%, Ni 0.5 to 1.0% |
| Cement raw material | 3 to 5 | Cr15 to Cr20 | 8,000 to 15,000 | Mo 0.5 to 1.5% |
| Clinker (cement) | 5 to 6 | Cr20 to Cr26 | 6,000 to 10,000 | Mo 1.0 to 2.5%, Ni 0.5 to 1.0% |
| Blast furnace slag | 6 to 7 | Cr26 to Cr28 | 4,000 to 8,000 | Mo 2.0 to 3.0%, Ni 1.0 to 1.5% |
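
For planning purposes, the summary table can be held as a small lookup structure and turned into an expected number of liner changes per year. The sketch below transcribes the table values as given. The function name and the 7,000 operating hours per year used in the example are hypothetical and chosen only for illustration.

```python
from typing import NamedTuple, Tuple


class VrmRecommendation(NamedTuple):
    mohs: Tuple[int, int]                # typical Mohs hardness range
    cr_grade: str                        # recommended chromium grade
    service_life_hours: Tuple[int, int]  # typical service life range
    additions: str                       # key alloying additions


# Values transcribed from the summary table above.
VRM_TABLE = {
    "soft coal (low ash)": VrmRecommendation(
        (1, 2), "Cr15", (12_000, 18_000), "Mo 0.5 to 1.0%"),
    "hard coal (high ash)": VrmRecommendation(
        (3, 5), "Cr20 to Cr26", (6_000, 12_000), "Mo 1.0 to 2.0%, Ni 0.5 to 1.0%"),
    "cement raw material": VrmRecommendation(
        (3, 5), "Cr15 to Cr20", (8_000, 15_000), "Mo 0.5 to 1.5%"),
    "clinker (cement)": VrmRecommendation(
        (5, 6), "Cr20 to Cr26", (6_000, 10_000), "Mo 1.0 to 2.5%, Ni 0.5 to 1.0%"),
    "blast furnace slag": VrmRecommendation(
        (6, 7), "Cr26 to Cr28", (4_000, 8_000), "Mo 2.0 to 3.0%, Ni 1.0 to 1.5%"),
}


def changes_per_year(material: str, operating_hours_per_year: float) -> Tuple[float, float]:
    """Return (fewest, most) expected liner changes per year for a ground material."""
    rec = VRM_TABLE[material.strip().lower()]
    shortest, longest = rec.service_life_hours
    return operating_hours_per_year / longest, operating_hours_per_year / shortest


# Hypothetical duty: a slag mill running 7,000 hours per year.
low, high = changes_per_year("Blast furnace slag", 7_000)
print(f"{low:.2f} to {high:.2f} liner changes per year")
```

Keeping the ranges rather than single midpoints makes the spread in the table visible in the planning figure, which matters because service life depends strongly on the characteristics of the material being ground.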

How to Improve the Wear Resistance of High Chromium Castings

Wear resistance in high chromium castings is not a fixed property determined by chemistry alone. It is the outcome of the entire production process from alloy design through melting, solidification, and heat treatment, and it can be substantially improved through targeted interventions at each stage. Understanding which variables have the largest effect on wear performance allows foundries and end users to make well directed improvements rather than applying general quality improvements that may not address the specific limiting factor in their application.

Heat Treatment: The Single Most Impactful Variable

The heat treatment of high chromium white iron castings is the single production step with the largest effect on the final wear resistance of the casting. The purpose of heat treatment is to transform the metallic matrix from its as cast condition (a mixture of austenite, carbides, and often some pearlite or martensite depending on the alloy and cooling rate) to a fully martensitic condition that provides both maximum hardness and the toughness needed to resist fracture under impact loading.

The standard heat treatment cycle for high chromium white iron consists of two stages:

- Destabilization (austenitizing) treatment: The casting is heated to a temperature in the range of 950 to 1,060 degrees Celsius (the precise temperature depending on the alloy grade) and held for 2 to 6 hours depending on section thickness. During this hold, fine secondary carbides precipitate from the as cast austenite, which is supersaturated in carbon and chromium. This precipitation lowers the carbon and chromium content of the matrix, raises its martensite start temperature, and allows the austenite to transform to martensite during the subsequent quench. The destabilization temperature must be controlled within plus or minus 10 degrees Celsius: too low a temperature depletes the matrix of carbon and yields a softer, low carbon martensite, while too high a temperature keeps more carbon in solution, which increases retained austenite and reduces hardness.

- Air or oil quench: After the destabilization hold, the casting is transferred rapidly to the quench medium. Air quench (forced air cooling) is sufficient for most alloys with adequate hardenability additions (molybdenum above 1.5 percent for sections up to 100 millimeters); oil quench is used for alloys with lower hardenability or for very thick sections where air cooling is too slow to suppress pearlite formation. The goal is to cool through the pearlite nose temperature (approximately 550 to 650 degrees Celsius) quickly enough to suppress pearlitic transformation and achieve a fully martensitic matrix, while cooling slowly enough at the martensite finish temperature (below 200 degrees Celsius) to avoid quench cracking from thermal stress.

Following the hardening treatment, a stress relief temper at 200 to 260 degrees Celsius for 2 to 4 hours is applied to reduce internal stresses developed during the rapid cooling, improving fracture resistance without significantly reducing matrix hardness.
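
The temperature and time windows in this cycle lend themselves to an automated check of furnace records. The following is a minimal sketch, assuming the furnace log is available as a list of temperature readings taken during the hold. The record structure and function name are hypothetical, and the limits are the general ranges quoted above, not the tighter limits a specific alloy grade would carry.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HeatTreatmentRecord:
    setpoint_c: float           # target destabilization temperature
    hold_temps_c: List[float]   # furnace readings logged during the hold
    hold_hours: float           # duration of the destabilization hold
    temper_c: float             # stress relief temper temperature
    temper_hours: float         # duration of the temper


def check_cycle(rec: HeatTreatmentRecord) -> List[str]:
    """Return a list of deviations from the cycle described above (empty if none)."""
    problems = []
    if not 950 <= rec.setpoint_c <= 1060:
        problems.append("destabilization setpoint outside 950 to 1,060 C")
    if any(abs(t - rec.setpoint_c) > 10 for t in rec.hold_temps_c):
        problems.append("hold temperature drifted more than 10 C from setpoint")
    if not 2 <= rec.hold_hours <= 6:
        problems.append("hold time outside 2 to 6 hours")
    if not 200 <= rec.temper_c <= 260:
        problems.append("temper temperature outside 200 to 260 C")
    if not 2 <= rec.temper_hours <= 4:
        problems.append("temper time outside 2 to 4 hours")
    return problems


# Hypothetical record: one reading drifted 15 C high and the hold was too short.
record = HeatTreatmentRecord(1000, [995, 1004, 1015], 1.5, 230, 3)
for problem in check_cycle(record):
    print(problem)
```

A check of this kind only confirms that the furnace followed the intended cycle. It does not replace hardness testing of the finished casting.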

Microstructure Refinement During Solidification

The carbide size and distribution achieved during solidification set the upper limit on wear resistance that even perfect heat treatment cannot exceed. Coarse, poorly distributed carbides provide less effective barrier to abrasive wear than fine, uniformly distributed carbides of the same total volume fraction, because coarse carbides allow larger abrasive particles to find matrix material between carbides to cut through, while fine carbides present an effectively uniform hard surface to the abrasive.

Carbide refinement can be achieved through:

- Controlled pouring temperature: Pouring at the lowest acceptable temperature for complete mold filling (typically 1,350 to 1,420 degrees Celsius for high chromium iron) increases undercooling during solidification, which promotes finer carbide nucleation and a finer final carbide size. Excessive pouring temperature coarsens the solidification microstructure and produces larger primary carbides.

- Inoculation: Small additions of titanium (0.05 to 0.1 percent), vanadium (0.1 to 0.3 percent), or other carbide forming elements provide additional nucleation sites for carbide formation during solidification, producing a finer and more uniform carbide distribution. The improvement in wear resistance from inoculation alone (without other changes) has been quantified at 10 to 20 percent in laboratory wear tests comparing inoculated and non inoculated heats of the same nominal composition.

- Controlled solidification rate: Faster solidification produces finer carbides. For small to medium castings, using metal chills at the working surface positions in the mold increases the cooling rate at those surfaces, producing finer carbides in the areas that experience the most wear in service.

Retained Austenite Management

After standard heat treatment, most high chromium white iron castings contain 5 to 20 percent retained austenite in the matrix, depending on the alloy composition and heat treatment parameters. Retained austenite is a softer phase (approximately 300 to 400 HV) than martensite (800 to 1,000 HV), and high levels of retained austenite reduce the matrix hardness and abrasive wear resistance of the casting. In applications where maximum abrasive wear resistance is required and impact loading is modest, the retained austenite content should be reduced to below 10 percent through one of the following approaches: subzero or cryogenic treatment at minus 70 to minus 196 degrees Celsius after the normal heat treatment, which cools the casting below its martensite finish temperature, or compositional adjustment to raise the martensite start temperature.

In applications with significant impact loading, some level of retained austenite (10 to 20 percent) is beneficial because it provides crack arrest toughness that prevents impact initiated microcracks from propagating through the casting. The optimal retained austenite level is therefore application specific, and it represents a wear resistance versus toughness tradeoff that must be resolved based on the dominant failure mode in the specific service environment.

How to Maintain High Chromium Castings for Long Life

Maintenance of high chromium castings in crusher and grinding mill applications has two dimensions. The first is operational practice that preserves the integrity of installed wear parts. The second is monitoring and replacement planning that extracts the maximum useful life from each part without the production losses and mechanical damage that occur when parts run past their serviceable limit. The following maintenance framework addresses both.

Operational Practices That Preserve Casting Integrity

The way a crusher or grinding mill is operated has a direct effect on the wear rate and fracture incidence of its high chromium castings, and operational discipline around the following practices produces measurable improvements in casting service life:

- Control tramp metal and uncrushable material in the feed: Steel reinforcement bar, excavator teeth, and other tramp metal in crusher feed creates extreme impact events that can fracture high chromium iron blow bars, apron liners, and VSI shoes even if the normal operating impact level is within the material's toughness range. Install and maintain effective tramp metal detection (typically metal detectors and magnet systems appropriate to the material type) upstream of all impact crushers processing mixed or demolition derived feed streams.

- Control feed size and feed rate: Oversized feed in an impact crusher creates higher than design impact events that accelerate wear and increase fracture risk. Maintain pre crusher scalping and sizing screens in good working condition to ensure that crusher feed conforms to the nominal feed size specification for which the blow bars and liners were designed. Feed rate surges that cause the rotor to impact massive material accumulations rather than individual particles similarly increase peak impact loads; use controlled feed systems (belt feeders with speed control rather than surge hoppers with uncontrolled discharge) to maintain consistent feed rate.

- Maintain correct rotor speed and gap settings: In impact crushers, rotor speed determines the kinetic energy of each blow bar to rock impact and is the primary operating variable affecting both production rate and wear rate. Running at the minimum rotor speed that achieves the required product size specification minimizes per tonne wear rate; running at higher speed than necessary increases energy consumption and accelerates wear without improving product quality. Verify that apron gap settings are within specification; excessively tight gaps increase the likelihood of large fragments jamming between rotor and apron, creating impact events that can fracture both blow bars and aprons simultaneously.

- Rotate blow bars at correct intervals: Horizontal shaft impact crushers are designed so that blow bars can be rotated 180 degrees or repositioned from leading edge to trailing edge orientation when one side has worn to the rotation limit. Rotation at the correct point equalizes wear between the two working surfaces of each blow bar, effectively doubling the total wear volume extracted from each bar compared to running to complete wear on one face before rotation. Delayed rotation results in uneven wear that reduces the usable wear volume and can create rotor imbalance that increases bearing loads and vibration.
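
The sensitivity of impact severity to rotor speed follows from the kinetic energy relation, in which energy grows with the square of velocity. The sketch below is a simplification: it treats a rock fragment as starting at rest and being brought up to blow bar tip speed, and the 5 kilogram fragment mass is a hypothetical value. The tip speeds span the 25 to 45 meters per second range quoted earlier for horizontal shaft impactors.

```python
def impact_energy_j(mass_kg: float, tip_speed_m_s: float) -> float:
    """Kinetic energy of a fragment brought up to blow bar tip speed, in joules."""
    return 0.5 * mass_kg * tip_speed_m_s ** 2


def energy_ratio(speed_m_s: float, reference_speed_m_s: float) -> float:
    """Impact energy at one tip speed relative to another (mass cancels out)."""
    return (speed_m_s / reference_speed_m_s) ** 2


FRAGMENT_KG = 5.0  # hypothetical fragment mass, for illustration only

for v in (25.0, 35.0, 45.0):
    energy = impact_energy_j(FRAGMENT_KG, v)
    ratio = energy_ratio(v, 25.0)
    print(f"{v:.0f} m/s: {energy:.1f} J, {ratio:.2f}x the energy at 25 m/s")
```

Moving from the bottom to the top of the tip speed range raises the energy of each impact by a factor of about 3.2, which is why running faster than the product specification requires carries a disproportionate wear and fracture penalty.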

Inspection and Wear Measurement Schedule

Systematic measurement of casting wear depth at regular intervals is the basis of effective replacement planning. Without quantitative wear data, replacement decisions are based on visual assessment alone, which tends to result in either premature replacement of parts with remaining service life (incurring unnecessary part cost) or delayed replacement of parts worn below their safe operating limit (risking mechanical damage to the host equipment).

Establish a wear measurement routine using calipers or ultrasonic thickness gauges that measures wear depth at defined reference points on each casting at regular inspection intervals (typically every 250 to 500 operating hours for heavily loaded crusher wear parts and every 500 to 1,000 hours for VRM grinding elements). Record these measurements in a tracking spreadsheet and plot cumulative wear versus operating hours. The resulting wear rate curve allows prediction of the remaining service life at any inspection point, enabling planned replacement to be scheduled during a convenient maintenance window rather than responding to an emergency breakdown caused by a worn out part.
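
The remaining life prediction described here can be sketched as a least squares line fitted to the logged measurements. This assumes wear progresses roughly linearly with operating hours, which should be confirmed against the plotted data before the forecast is trusted. The function names, the sample readings, and the 40 millimeter wear limit are hypothetical.

```python
from typing import Sequence, Tuple


def fit_wear_rate(hours: Sequence[float], wear_mm: Sequence[float]) -> Tuple[float, float]:
    """Least squares line through (hours, wear). Returns (mm per hour, intercept mm)."""
    n = len(hours)
    if n < 2 or n != len(wear_mm):
        raise ValueError("need at least two paired measurements")
    mean_h = sum(hours) / n
    mean_w = sum(wear_mm) / n
    sxx = sum((h - mean_h) ** 2 for h in hours)
    sxy = sum((h - mean_h) * (w - mean_w) for h, w in zip(hours, wear_mm))
    slope = sxy / sxx
    return slope, mean_w - slope * mean_h


def remaining_hours(hours: Sequence[float], wear_mm: Sequence[float],
                    wear_limit_mm: float) -> float:
    """Operating hours left before the fitted line reaches the wear limit."""
    slope, intercept = fit_wear_rate(hours, wear_mm)
    if slope <= 0:
        raise ValueError("measurements show no wear trend")
    hours_at_limit = (wear_limit_mm - intercept) / slope
    return max(0.0, hours_at_limit - hours[-1])


# Hypothetical inspection log for one blow bar, measured every 250 hours.
log_hours = [0, 250, 500, 750]
log_wear_mm = [0.0, 5.0, 10.0, 15.0]
left = remaining_hours(log_hours, log_wear_mm, wear_limit_mm=40.0)
print(f"estimated remaining life: {left:.0f} operating hours")
```

With the sample log, the fitted rate is 0.02 millimeters per hour and the forecast is about 1,250 further operating hours. Refitting after every inspection keeps the forecast current if the feed material or operating settings change.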

Welding Repair of High Chromium Castings

High chromium white iron is difficult to weld by conventional methods because of its brittleness and high carbon equivalent, which promote cracking in both the weld deposit and the heat affected zone adjacent to the weld. However, hardfacing weld overlay using appropriate chromium carbide hardfacing electrodes or flux cored wire can be used to restore worn surfaces of thick section castings in situ, extending service life without the cost of full part replacement. The key requirements for successful hardfacing of high chromium iron castings are:

- Preheat to 400 to 500 degrees Celsius before welding to reduce the thermal gradient between the weld pool and the surrounding cold casting material, which is the primary driver of heat affected zone cracking in high carbon, high chromium irons.

- Use chromium carbide hardfacing consumables (not general purpose or austenitic stainless electrodes) that produce a deposit with wear properties comparable to the base casting material. A deposit from an unsuitable electrode that is softer than the surrounding base material will wear preferentially, negating the purpose of the repair.

- Control interpass temperature and allow controlled slow cooling after welding by wrapping the part in insulating blankets, to minimize thermal stresses during cooling that cause delayed cracking in thick section castings.

High chromium castings represent a technically mature and economically proven solution to the wear challenge in the most demanding industrial applications. The combination of selecting the appropriate chromium grade for the specific abrasive and impact conditions, specifying correct heat treatment parameters to maximize matrix hardness and toughness, applying best practice operational discipline to preserve casting integrity in service, and implementing systematic wear measurement and replacement planning produces the lowest total cost of ownership from high chromium wear parts across the full service life of crushing and grinding equipment.

Quality Control in High Chromium Casting Production

The performance consistency of high chromium castings in service depends on the rigor of quality control applied throughout their production. Unlike commodity steel products where composition and mechanical property ranges are tightly governed by widely adopted standards, high chromium white iron castings are frequently produced to proprietary or application specific specifications where the production quality controls applied by the foundry are the primary assurance of consistent performance. Understanding what quality controls should be specified and verified when procuring high chromium castings enables buyers to distinguish reliable sources from those producing inconsistent product.

Chemical Composition Verification

Each heat of high chromium iron should be analyzed before pouring using optical emission spectrometry (OES) on a sample taken from the ladle or furnace. The analysis must confirm that all specified alloying elements (chromium, carbon, molybdenum, nickel, and silicon) are within the target composition range before the heat is poured into molds. Heats outside specification should be corrected through alloy additions before pouring; pouring an out of specification heat in the expectation that it will be acceptable represents a significant quality risk because the consequences of incorrect composition on wear performance and heat treatment response may not be apparent until the parts are installed in service.

Buyers should require mill test certificates (MTC) showing actual ladle analysis for each production batch, rather than accepting generic grade certificates that confirm compliance with a standard specification without reporting the actual composition of the specific parts supplied. Comparison of MTC data across multiple orders allows trends in composition variation to be identified before they affect service performance, and provides the data needed to correlate composition variations with observed differences in service life between batches.
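
Comparing certificate data against the ordered composition is easy to automate. The following is a minimal sketch, assuming the ladle analysis from each certificate has been keyed in as weight percent per element. The limits shown are the broad ranges quoted earlier in this article for a Cr26 type alloy and stand in for the tighter limits of a real purchase specification. Silicon is omitted because no range for it is given here.

```python
from typing import Dict, Tuple

# Illustrative limits in weight percent, taken from the general ranges in this
# article. A real purchase specification would normally be narrower.
CR26_LIMITS: Dict[str, Tuple[float, float]] = {
    "C": (2.0, 3.5),
    "Cr": (25.0, 28.0),
    "Mo": (0.5, 3.0),
    "Ni": (0.5, 1.5),
}


def out_of_spec(analysis: Dict[str, float],
                limits: Dict[str, Tuple[float, float]]) -> Dict[str, str]:
    """Return the elements that are missing from or outside the limits."""
    deviations = {}
    for element, (low, high) in limits.items():
        value = analysis.get(element)
        if value is None:
            deviations[element] = "not reported"
        elif not low <= value <= high:
            deviations[element] = f"{value} outside {low} to {high}"
    return deviations


# Hypothetical ladle analysis from one certificate.
heat = {"C": 2.8, "Cr": 24.2, "Mo": 1.2}
for element, issue in out_of_spec(heat, CR26_LIMITS).items():
    print(f"{element}: {issue}")
```

Storing the analysis for every batch in this form also provides the history needed to spot composition drift across orders.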

Hardness Testing After Heat Treatment

Every high chromium iron casting should be Rockwell hardness tested after heat treatment to verify that the required hardness has been achieved throughout the intended measuring zone. For most crusher and grinding mill wear parts, the specified hardness range is 58 to 66 HRC depending on the alloy grade and application. Hardness testing should be performed at a minimum of three locations per casting: two opposing working surface positions and one edge position. A casting that shows acceptable hardness on the working surface but significantly lower hardness at the edge positions indicates incomplete martensite transformation in regions of lower cooling rate during quench, which may produce preferential wear at those positions in service.
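
The three point acceptance check can be expressed as a short routine. This is a sketch under stated assumptions: the 58 to 66 HRC window is the general range quoted above, while the 3 HRC threshold used to flag an edge reading as significantly lower than the working surface is a hypothetical value that each buyer would need to set. Reading labels are assumed to start with "surface" or "edge".

```python
from typing import Dict, List


def check_hardness(readings_hrc: Dict[str, float],
                   lo_hrc: float = 58.0,
                   hi_hrc: float = 66.0,
                   max_edge_drop_hrc: float = 3.0) -> List[str]:
    """Return findings for one casting (an empty list means it passes)."""
    findings = []
    for location, value in readings_hrc.items():
        if not lo_hrc <= value <= hi_hrc:
            findings.append(f"{location}: {value} HRC outside {lo_hrc} to {hi_hrc} HRC")

    surface = [v for k, v in readings_hrc.items() if k.startswith("surface")]
    edge = [v for k, v in readings_hrc.items() if k.startswith("edge")]
    if len(surface) < 2 or len(edge) < 1:
        findings.append("need two working surface readings and one edge reading")
    elif min(surface) - min(edge) > max_edge_drop_hrc:
        # Hypothetical threshold: flags possible incomplete martensite at the edge.
        findings.append("edge hardness significantly below working surface")
    return findings


# Hypothetical readings: the edge is in range but well below the working surface.
casting = {"surface_a": 63.0, "surface_b": 63.5, "edge": 58.5}
for finding in check_hardness(casting):
    print(finding)
```

Flagging the edge to surface difference separately from the absolute range catches castings that pass on every individual reading but still show the uneven transformation described above.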

For large castings where section thickness variation may affect through thickness hardness distribution, destructive hardness traverse testing on samples cut from representative positions of prototype or first article castings establishes the hardness gradient across the section and verifies that the heat treatment achieves the minimum required hardness at all depths that will be exposed during the full service life of the part. This testing is particularly important for VRM grinding roller tires and table segments with sections exceeding 100 millimeters, where the core hardness after heat treatment is critical to performance as the surface wears and deeper material becomes the working surface over time.

Dimensional Inspection and Surface Quality

Dimensional conformance to the specified drawing is verified by measurement of all critical dimensions using calibrated gauges and templates. For castings that are finish machined after heat treatment (such as pump impellers, grinding ring segments, and precision wear plates), dimensional measurement after final machining confirms that the machining has achieved the required dimensional accuracy and surface finish. For castings that are used in the as cast or as ground condition, dimensional checks focus on the mounting and mating surfaces that determine correct fit and alignment in the host equipment.

Surface quality inspection covers both the visual appearance of the casting surface and non destructive testing for subsurface defects in critical applications. Visual inspection identifies surface breaking shrinkage porosity, cold shuts, hot tears, and significant surface roughness that indicate casting quality problems. For high consequence applications such as large VSI rotor shoes, VRM grinding elements, and components in critical process machinery, dye penetrant testing or magnetic particle testing of accessible surfaces provides additional confidence that no surface breaking cracks are present before the parts are installed in service. Cracks in high chromium iron castings do not self arrest as they might in ductile materials; a surface crack on a heavily loaded impact crusher wear part can propagate rapidly to catastrophic fracture under operating loads, making pre service crack detection a meaningful investment in both safety and production reliability.
